Intro

As a PowerBI professional, you will surely value tools like DAX Studio and Tabular Editor as they are essential for efficient development of PowerBI datamodels and understanding and improving the code that you’ve written.

DAX Studio allows you to query your datamodel quickly, formats your DAX queries and helps you get an understanding how these queries perform. It can show you how much time is spend in the Formula engine of Analysis Services and how much in the Storage Engine. On top of that it can provide you with all kinds of metrics on your model, the table sizes, cardinality, relationships, integrity violations, fields that consume the most space in the dataset through its VertiPaq analyzer.

This tool merits one or more videos of its own.

Tabular Editor allows you to interact with the datamodel and change any of the available properties, that are not even exposed through PowerBI Desktop, like creating Calculation Groups, quickly hiding many measures, but also has a Best Practices analyser warning you about possible improvements to your model.

Again, it would take too much time to go into detail in this video.

Tabular Tools

When one day an email appears in your mailbox to join the waiting list for something called DAX Optimizer by a company called Tabular Tools, it’s needless to say this could only be a very promising effort.

Tabular Tools is a partnership between SQLBI (founded by Marco Russo and Alberto Ferrari, responsible for DAX Studio, DAX Patterns, etc…) and Tabular Editor (founded by Søren Toft Joensen and Daniel Otykier who created the tool with the same name).

Registration

Yesterday, the wait was finally over, and I received my invite code to sign up for this new tool. They offer various plans that charge either by single Optimizer Run, or 2 subscription plans; one for optimizing a model in PowerBI Desktop, and another that also analyses a model running in the service. Those are limited to 20 Runs per day.

The pricing is not cheap, but in my opinion justified by the value it creates for PowerBI professionals. The time spend on going through your (or let’s face it: someone else’s) code and detecting where some sub-optimal constructs are used, specifically in DAX can be quite time consuming. When you get the full list of issues to fix and afterwards save important time for your users that can now benefit from faster reports, combined with the vast amount of research and knowledge put into creating this type of tool, I would say it’s well worth it.

After subscribing, you receive an activation code, that allows to setup the environment. To my surprise, this was not a tool to download or an add-in, but the DAX optimizer is a website.

Using the tool

The tool is still in private beta, but appears to work very well. When you log-in, you arrive in your workspace, where you find the list of your models.

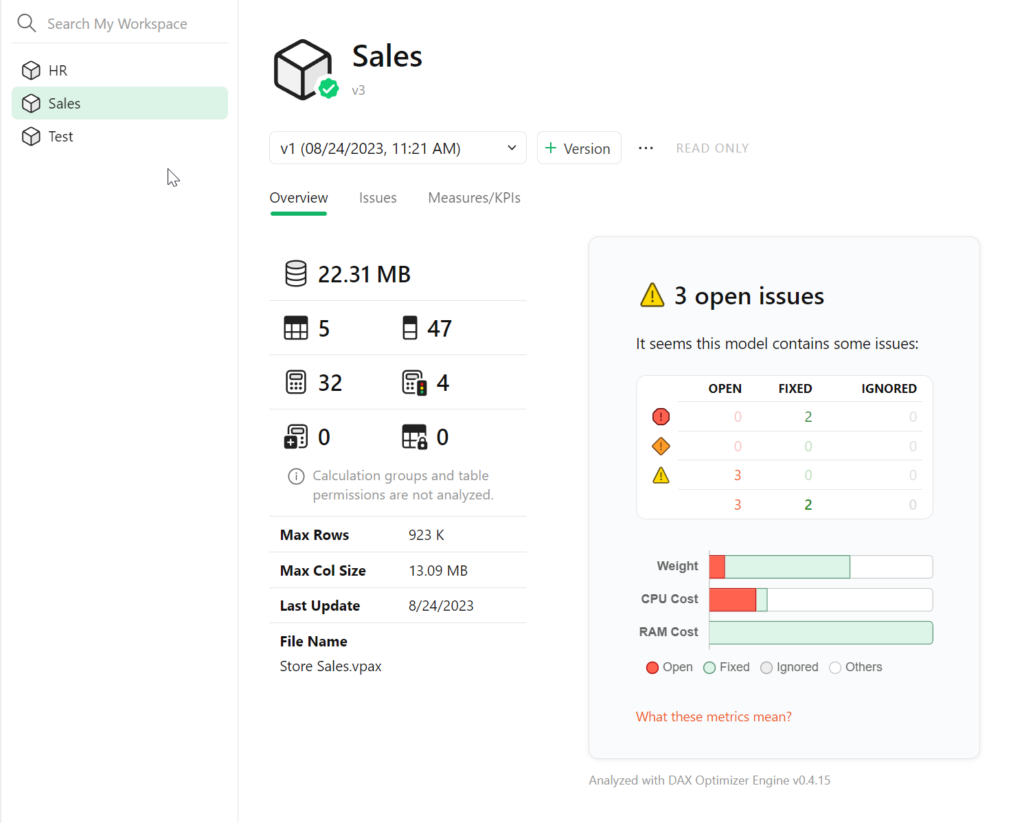

A model is a collection of different versions of your PowerBI datamodel to be analyzed. You can add a version of the model by clicking the + Version button.

This is actually a VertiPaq file, which you can create with the DAX Studio tool. When in PowerBI Desktop with your model open, click on DAX Studio, Advanced and Export Metrics. Make sure you are on the latest version of DAX Studio (3.10 as of the recording of this video). After you saved the file, upload it to DAX Optimizer and it will analyse the structure of your model, without giving any recommendations. It just wants to know what it’s dealing with, number of measures, size, etc..

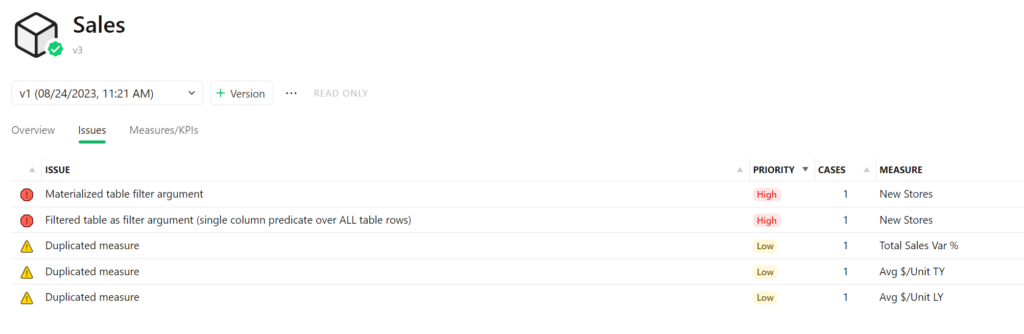

Once ready, you can click on “Analyse this model”, and you need to agree that this will consume one of your included (or purchased) optimizer runs. After a few minutes, the tool comes back with the results in summary, grouped by Priority. Click on Issues to get the full list to work on.

The nature of the issues is described and the Measure it affects is listed. Open the issue to get more detailed information. All affected measures are listed, the code of the selected measure (which can easily be formatted on the bottom right) is shown with the problem highlighted. Expand the issue description to get all details and various examples on how the issue could be addressed. You can also follow the link to the Knowledge Base.

Now that you’ve identified the issue, you can go back to PowerBI Desktop and make the necessary code change. Check if the results make sense and you can export a new VertiPaq file. You can select this measure to be fixed in the model, and you’ll see the impact on the overall open issues. You can also decide this is not something that needs to be addressed and mark it to be ignored.

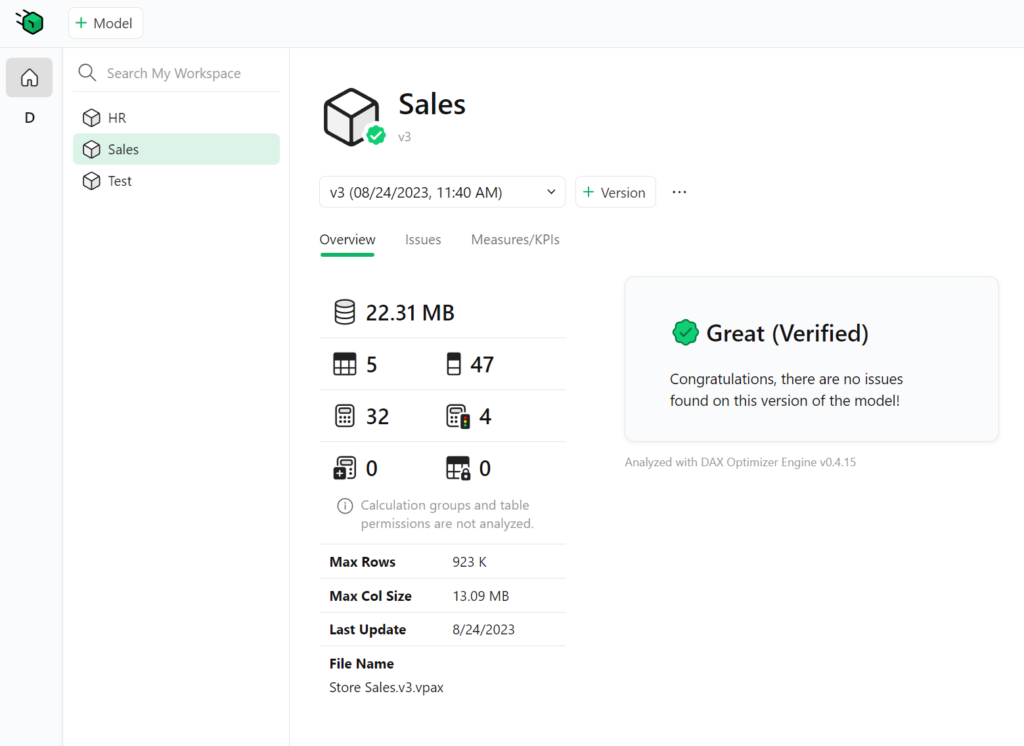

Upload a new version of the model and re-run the analysis to see if this version fixed the issue. You can always move back to previous versions to see the issues and code of the measures at that point in time.

Conslusion

After spending some time with the tool, I was able to efficiently improve my models and notice substantial time savings. When running the performance analyzer in PowerBI Desktop some of my corrections brought calculations back from 2 seconds in the DAX query to 100ms. Many of those little fixes can in the long run optimize a big model and make it more reliable to continue to build on.

I’m curious to see what other functionality the DAX optimizer will bring in the future, but for this pre-release it already does exactly what is promised on the tin: Optimize your DAX.